SelfPose: 3D Egocentric Pose Estimation from a Headset Mounted Camera

Abstract

Dataset

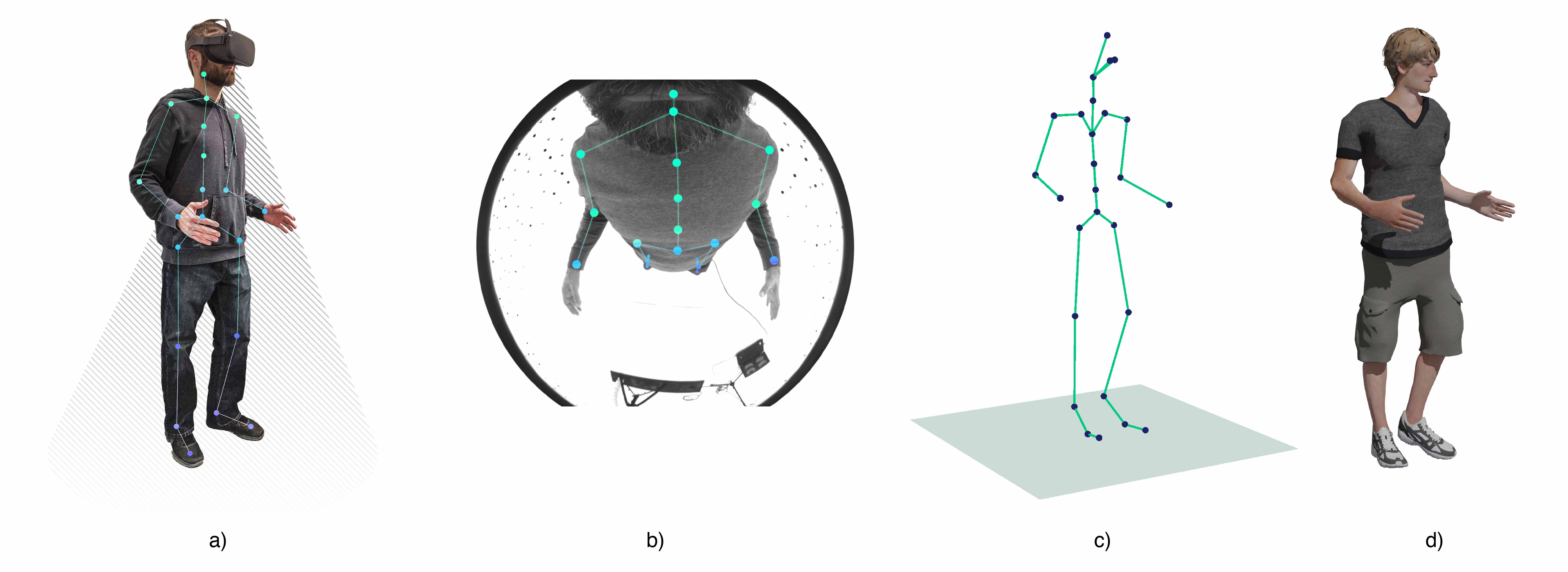

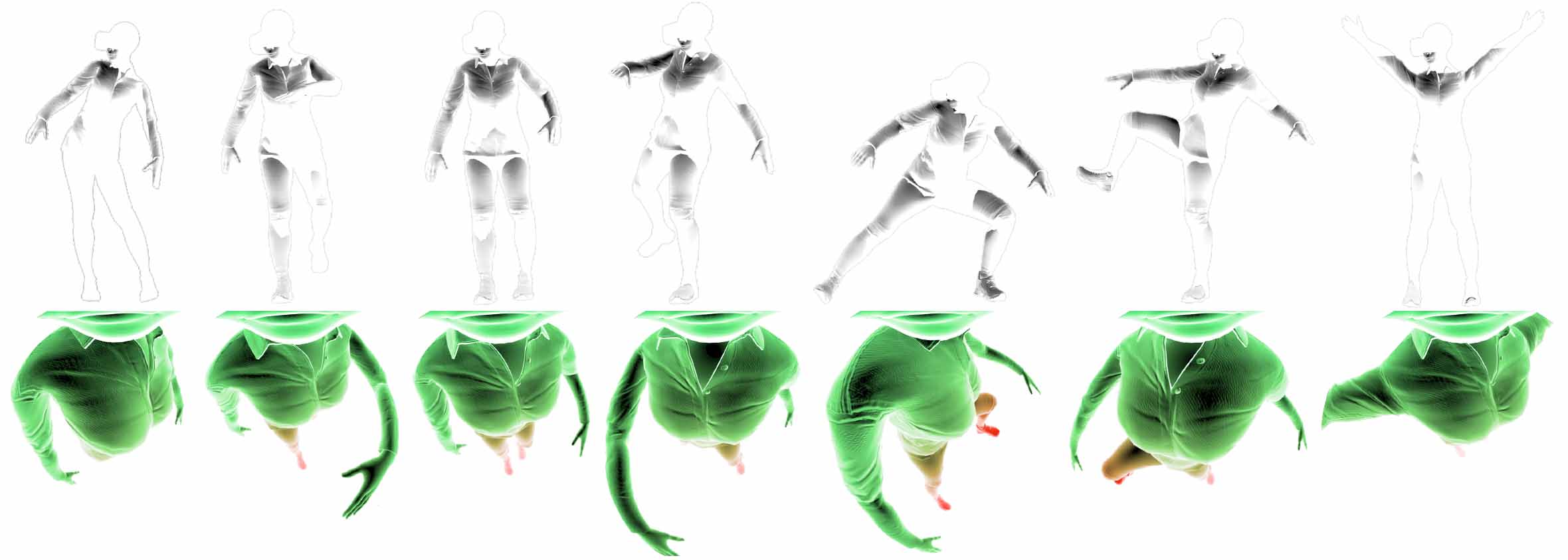

This figure illustrates the challenges faced in egocentric human pose estimation: severe self-occlusions, extreme perspective effects and lower pixel density for the lower body. The color gradient indicates the density of image pixels for each area of the body: green is higher pixel density, whereas red is lower density.

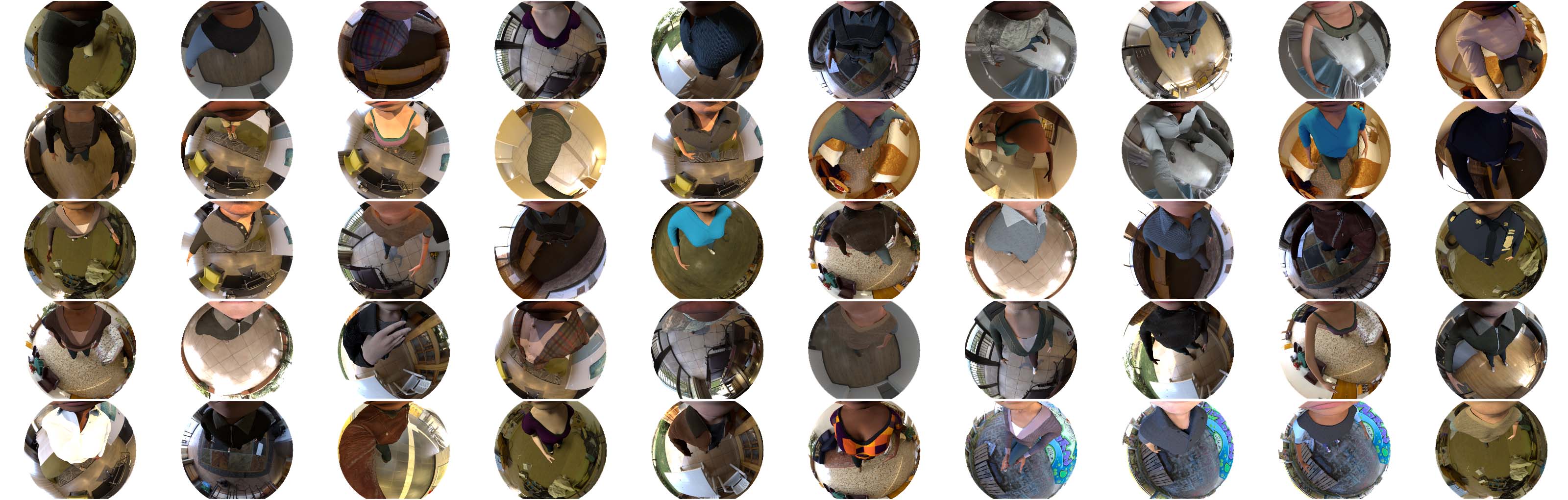

To face these challenges, we created a new large-scale dataset of 383K frames that focuses on realism, with augmentation of characters, environments, and lighting conditions. It departs from the only other existing monocular egocentric dataset from a head-mounted fish-eye camera in its photorealistic quality, its different viewpoint (since the images are rendered from a camera located on a VR HMD), and its high variability in characters, backgrounds and actions. A further advantage of this architecture is that the second branch is only needed at training time and can be removed at test time, guaranteeing the same performance and a faster execution.
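The idea of a branch that is used only during training can be illustrated with a minimal sketch. This is not the authors' implementation: the class name `DualBranchDecoder`, the layer sizes, the random weights, and the assumption that the second branch is an auxiliary reconstruction head are all hypothetical. The sketch only shows that skipping the second branch at test time leaves the pose output unchanged.

```python
import numpy as np


class DualBranchDecoder:
    """Toy two-branch decoder (hypothetical, for illustration only)."""

    def __init__(self, input_dim, latent_dim, num_joints, seed=0):
        rng = np.random.default_rng(seed)
        self.num_joints = num_joints
        # Shared encoder and two heads, with random stand-in weights.
        self.w_enc = rng.standard_normal((input_dim, latent_dim)) * 0.1
        self.w_pose = rng.standard_normal((latent_dim, num_joints * 3)) * 0.1
        self.w_aux = rng.standard_normal((latent_dim, input_dim)) * 0.1

    def forward(self, features, training):
        # Shared latent code used by both branches.
        latent = np.tanh(features @ self.w_enc)
        # First branch: 3D joint positions, always computed.
        pose = (latent @ self.w_pose).reshape(-1, self.num_joints, 3)
        if not training:
            # Second branch is skipped entirely at test time.
            return pose, None
        # Second branch (assumed auxiliary reconstruction of the input),
        # which would only contribute a training loss.
        aux = latent @ self.w_aux
        return pose, aux


model = DualBranchDecoder(input_dim=64, latent_dim=20, num_joints=16)
batch = np.random.default_rng(1).random((4, 64))

pose_train, aux_train = model.forward(batch, training=True)
pose_test, aux_test = model.forward(batch, training=False)
```

Because the pose branch does not depend on the output of the second branch, `pose_train` and `pose_test` are identical here, while the test-time call avoids the extra matrix product.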

Videos

Materials

BibTeX

@ARTICLE{9217955,
  author={Tome, Denis and Alldieck, Thiemo and Peluse, Patrick and Pons-Moll, Gerard and Agapito, Lourdes and Badino, Hernan and De la Torre, Fernando},
  journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},
  title={SelfPose: 3D Egocentric Pose Estimation from a Headset Mounted Camera},
  year={2020},
  volume={},
  number={},
  pages={1-1},
  doi={10.1109/TPAMI.2020.3029700}
}

Patrick Peluse
